I used to believe the future was in our children's hands, and that today's problems could be solved by those little hands. But some organizations are generating very powerful technology so fast that our children are already feeling its impact. At the same time, most adults are struggling to figure out what's happening and whether it's even a problem. The future, including our children's future, is in our hands.

Technology can be distributed extremely fast, and with the lack of regulation that distribution happens mostly without our consent. We hardly understand what's included in the latest updates to our phones, apps or software, where and to whom our data is being sent, or what design biases the technology carries. But we now understand these are questions we can ask. At the same time that we are invaded by all this data collection and privacy abuse, we have the possibility to learn so much online. More and more learning communities are sharing their know-how and opening their discoveries to the public. We have the power to understand what's happening, to participate, share our opinions, organize and change our reality. But that journey is a rocky road.

For the last 10 years I have been dedicated to improving children's education. But a few weeks ago I started a Spanish-speaking gathering for adults to talk about AI. I'm fairly new to AI, but creating the conditions for learning is my gift. I was lucky enough to be selected as an OpenAI scholar, and one of my goals is to share what I'm learning. So I posted an invite on FB for an AI gathering. Many friends my age responded, but few of them came. The people who came, and are still coming, are my parents' friends (60+ years old). Most of the AI resources I found are not designed for that population, even though they participate politically (70-80% of them vote in the US) and they represent ~20% of the total voting population in the US and Europe.

This is the beginning of a project that studies what the curriculum for this population would be, what they want to know and how deep they want to go. So far we have played with Weakinator, learned how different types of Machine Learning methods work, what some uses of AI are today, and a few of the ethical concerns we should be aware of (thanks to my hero Cathy O'Neil and Well Santo for the inspiration). All the participants have very clear questions regarding AI: Which jobs will AI take? How can I prepare my grandchildren? What can AI do? How can I use it? How does it work? What's up with the self-driving cars? Does AI mean humans are obsolete? Working with them is really inspiring; none of the starting participants has missed a meet-up yet. This is a population that probably prefers gathering in person to online information.

Another adult-learning project I'm working on in parallel is to explore with educators ways to teach AI to children, and to think about how AI is changing how, why and what children need to learn. This last Saturday I co-hosted, with Stefania Druga (a good friend and expert in AI tools for education), a gathering for educators at the FabLearn conference at Columbia University in NYC. Educators came together, talked about AI and tested some AI learning tools. Educators are a great population for thinking about how to use AI to support human development instead of just using it for profit; finding ways to use tools for learning is their everyday life. I showed the unicorn example from the language model that OpenAI partially released a few weeks ago. One of the questions I asked was: How could we use a model like this? These are some of the very interesting comments they made (thanks to James Dec and Corinne Takara for some of these comments):

We can all understand AI because it's about learning and intelligence, something we are all familiar with. Together we can think of many ways to use it and regulate it. We need to create more access points to it, but my prediction is that getting into AI will be many times easier than getting into coding because... IT'S SO MUCH MORE EXCITING!!!

So... why should you care about AI? (Besides, of course, the movie-generated fear that one day "robots" will take over humanity.) Well, this is why I care (I'm sure I'll keep adding to the list, but for now):

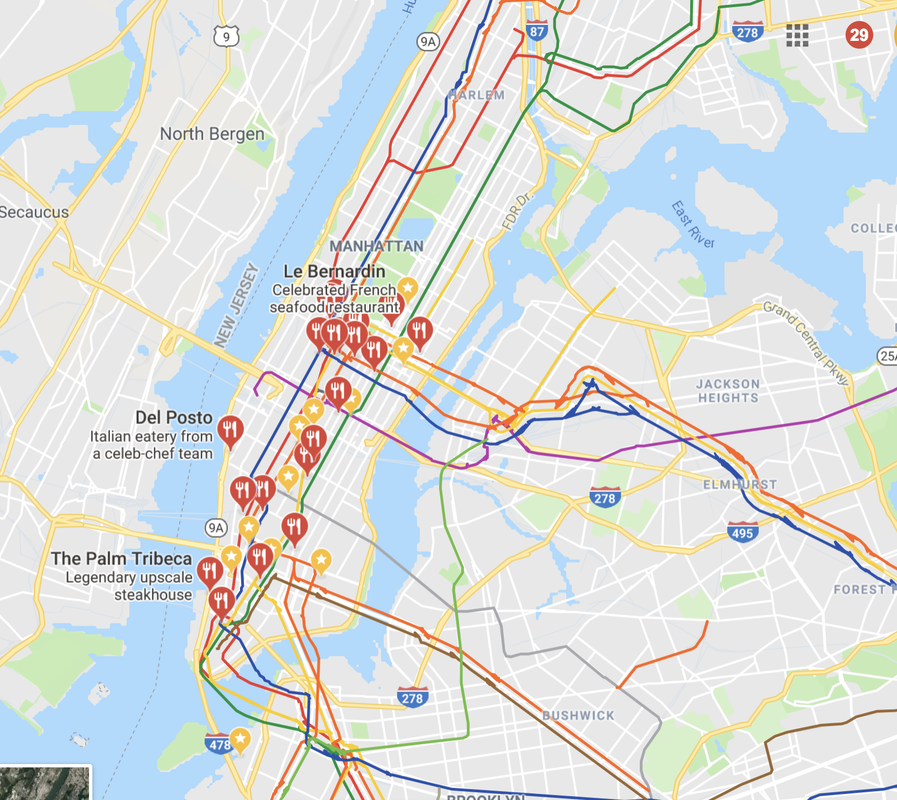

Humans have created different mechanisms for a machine to learn. The most popular are unsupervised learning (UL), supervised learning (SL) and reinforcement learning (RL).

Unsupervised Learning is used to learn the structure of a data set, which is analogous to us finding patterns in an experience. We use this type of learning a lot. An example is when we look at a map of a place we have never been to and try to make sense of it. We can identify areas with a lot of restaurants, areas that are well connected by public transportation, areas with gardens, residential areas, etc. Nothing explicitly labels those areas for us, but we use pattern matching to learn the layout of the map, how its different features (green areas, restaurants, transportation) are distributed and what patterns they form.

Supervised Learning learns features that are associated with a label. You might be very familiar with it: it's basically the way many schools like to teach. For example, you may have to learn the parts of the digestive system and learn to identify each one by its name (label). Later your teachers give you a diagram of the digestive system without labels and ask you to correctly identify and label each part.

Finally, Reinforcement Learning learns to maximize rewards in a specific environment. Take poker: someone can tell you the rules, but you need to play in order to experience winning and losing. Only then can you learn which strategies maximize winning.
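
The three kinds of learning can be sketched in a few lines of Python. This is only a toy illustration under stated assumptions, not how any real AI system is built: the function names, the made-up numbers, and the choice of techniques (k-means for UL, nearest neighbor for SL, an epsilon-greedy strategy picker for RL) are all hypothetical examples picked because they are short.

```python
import random


# Unsupervised learning: find groups in unlabeled data (1-D k-means).
def k_means_1d(values, centers, rounds=10):
    """Group unlabeled numbers around the nearest center, then re-center."""
    centers = list(centers)
    groups = [[] for _ in centers]
    for _ in range(rounds):
        groups = [[] for _ in centers]
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            groups[nearest].append(v)
        # Move each center to the mean of its group (keep it if the group is empty).
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups


# Supervised learning: answer with the label of the most similar labeled example.
def nearest_neighbor_label(examples, point):
    """examples is a list of (features, label) pairs; point is a feature tuple."""
    def squared_distance(example):
        features, _label = example
        return sum((a - b) ** 2 for a, b in zip(features, point))
    return min(examples, key=squared_distance)[1]


# Reinforcement learning: learn by playing which strategy wins most often.
def learn_best_strategy(win_probabilities, plays=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy: mostly play the best-looking strategy, sometimes explore.

    win_probabilities are hidden from the learner; they are only used to
    simulate whether a play wins (reward 1) or loses (reward 0).
    """
    rng = random.Random(seed)
    counts = [0] * len(win_probabilities)
    estimates = [0.0] * len(win_probabilities)
    for _ in range(plays):
        if rng.random() < epsilon:
            choice = rng.randrange(len(win_probabilities))  # explore
        else:
            choice = max(range(len(estimates)), key=lambda i: estimates[i])  # exploit
        reward = 1.0 if rng.random() < win_probabilities[choice] else 0.0
        counts[choice] += 1
        # Running average of the rewards seen so far for this strategy.
        estimates[choice] += (reward - estimates[choice]) / counts[choice]
    return max(range(len(estimates)), key=lambda i: estimates[i])


if __name__ == "__main__":
    # UL: positions of restaurants along a street, with no labels given.
    print(k_means_1d([1, 2, 3, 10, 11, 12], centers=[0, 5]))

    # SL: made-up (x, y) map locations that someone already labeled.
    labeled = [((1.0, 1.0), "garden"), ((5.0, 5.0), "restaurant")]
    print(nearest_neighbor_label(labeled, (4.8, 5.1)))

    # RL: two hypothetical strategies with made-up chances of winning.
    print(learn_best_strategy([0.2, 0.8]))
```

Notice the difference in what each function receives: the first gets only raw numbers, the second gets examples that already carry labels, and the third gets nothing but wins and losses from its own plays.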
